Will AI Really Replace Cybersecurity Jobs? What 2026 Data Tells Us

If AI were truly replacing cybersecurity professionals, you would expect the global skills shortage to be shrinking. However, the opposite is happening.

AI is reshaping cybersecurity, but it is not replacing cybersecurity jobs. Instead, it is changing the skills, priorities, and tools professionals need to succeed.

As AI-powered cyber attacks become more sophisticated and organisations face ongoing cybersecurity skills gaps, demand is growing for practitioners who can combine cybersecurity knowledge with AI literacy and sound human judgement.

For anyone looking to future-proof a career in cybersecurity but worried about being replaced by AI, here is what you need to know:

AI is raising the stakes, not removing humans

AI has become a force multiplier for both cyber attackers and defenders. Firebrand Training’s recent UK‑wide survey of senior leaders found that more than three‑quarters believe AI is increasing cyber risk for their organisation, yet only just over a quarter say they feel fully prepared to withstand AI‑enabled attacks.

These fears are not completely unfounded. In the same Firebrand research, nearly half of organisations reported at least one cyber attack in the previous year, and the majority of those victims were hit repeatedly.

IBM’s 2024 Cost of a Data Breach Report echoes this picture globally, with the average breach cost climbing to around USD 4.88 million and a significant proportion of organisations reporting major operational disruption when incidents occur.

Under this level of pressure, the practical question is no longer “Will AI replace cyber teams?” but “How quickly can cyber teams learn to use AI effectively?”

The skills gap means humans are more critical than ever

EU studies of the cybersecurity workforce estimate a worldwide shortfall of roughly four million professionals: the gap between the number of people in cyber roles and the number that organisations say they actually need.

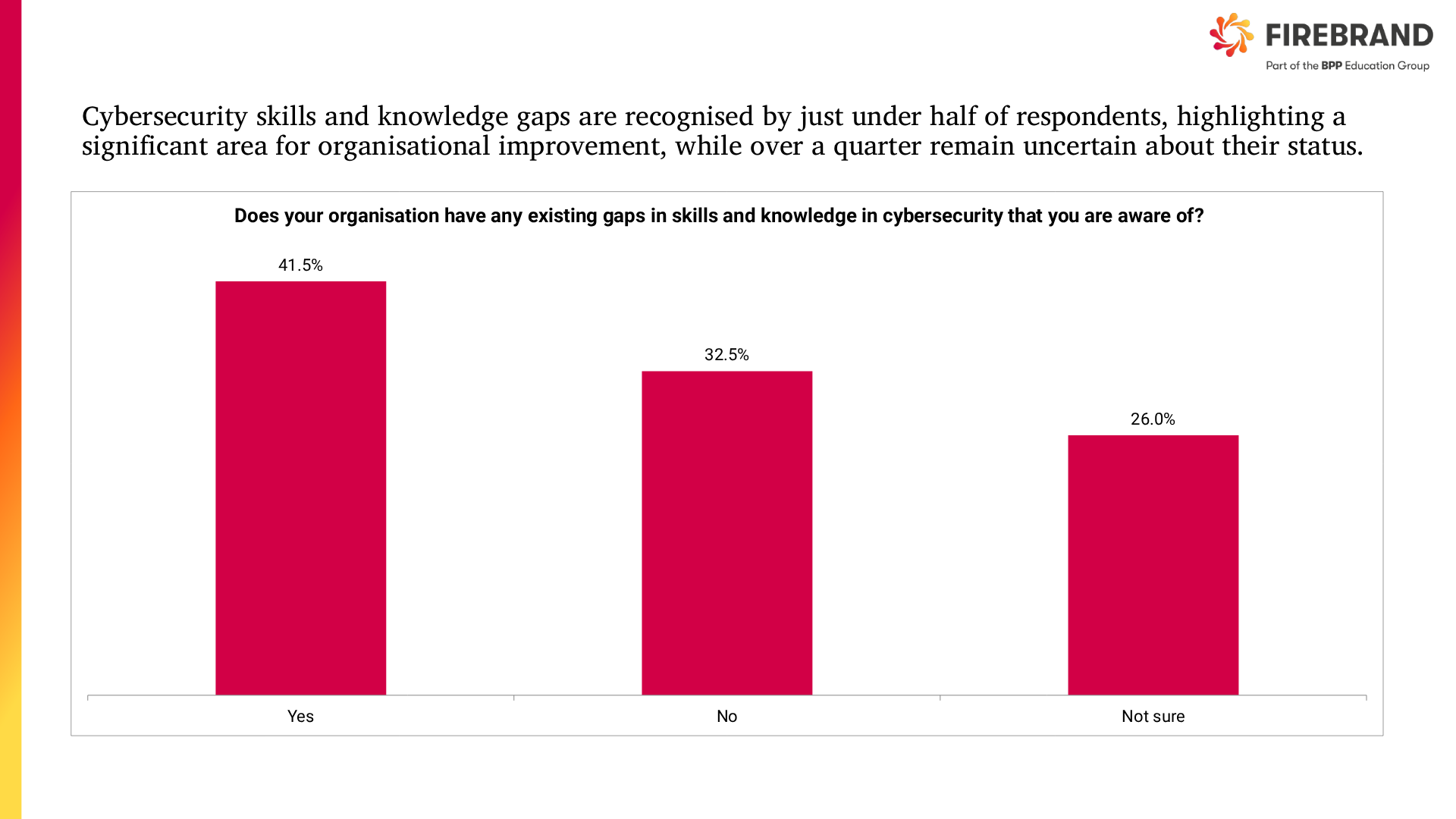

Closer to home, Firebrand’s 2026 survey on the UK cybersecurity skills gap, which covers sectors such as energy, financial services, retail, telecoms, professional services and IT, shows that nearly half of organisations openly admit to significant gaps in high‑end cyber skills. The most acute shortages are in areas like risk controls, information security, incident response and infrastructure security. These are precisely the domains where human judgement, prioritisation and communication matter most.

Cybersecurity skills gap affects the confidence of organisations

To make matters worse, just under half of the surveyed organisations experienced at least one cyber attack in the past year, and most of those suffered multiple incidents, underlining how costly these gaps really are.

Rather than eliminating roles, AI is colliding with an already‑strained talent pipeline. For most organisations, this means they need more skilled people who understand AI and security, not fewer.

How AI supports cyber teams and where it still needs people

Modern security operations centres already use AI and machine learning at scale in SIEM platforms, EDR tools, SOAR workflows and cloud security analytics, often without labelling it as such.

IBM’s research shows that organisations which apply security AI and automation extensively can cut breach‑related costs by around USD 2.2 million compared with those that do not. That is a strong incentive to automate wherever it is safe to do so.

Here is how that typically plays out in practice:

| Security capability | What AI can take on | Where humans add the most value | Skills to build for the AI era |
| --- | --- | --- | --- |
| Continuous threat monitoring | Correlates huge volumes of telemetry to highlight unusual patterns and risky activity in near real time. | Judging which signals matter, understanding attacker intent, and deciding when to escalate. | Threat hunting, SOC analysis, cyber threat intelligence |
| Incident handling and recovery | Automates playbook steps such as isolating endpoints, blocking IPs, and enriching alerts with context. | Owning the overall response, balancing business impact, and coordinating technical and business stakeholders. | Incident response leadership, crisis management, communication |
| Behaviour and identity analytics | Builds baselines for users and devices, and flags behaviour that deviates from the norm. | Interpreting behaviour in light of roles, policies and culture, and spotting subtle insider or account‑takeover risks. | Identity and access management, behavioural analytics, policy design |
| Vulnerability and configuration management | Continuously scans infrastructure and cloud environments, grouping and ranking technical findings. | Prioritising what to fix first, validating changes, and agreeing realistic timelines with product and operations teams. | Secure configuration, vulnerability management, stakeholder engagement |
| Phishing and human‑risk defence | Analyses message content, metadata and tone to filter likely phishing and malicious links. | Designing awareness campaigns, coaching high‑risk users, and investigating sophisticated social engineering. | Security awareness, social engineering defence, training delivery |
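To make the behaviour and identity analytics row more concrete, here is a minimal sketch of the "build a baseline, flag deviations" idea. It is an illustration only: the `flag_deviations` function, the daily login counts and the z-score threshold of 3.0 are all assumptions made for this example, and real products use far richer models than a simple per-user average.

```python
from statistics import mean, stdev


def flag_deviations(history, today, threshold=3.0):
    """Flag users whose activity today sits far outside their own baseline.

    history: dict of user -> list of past daily event counts (hypothetical data)
    today:   dict of user -> today's event count
    Returns a dict of user -> z-score for every user at or above the threshold.
    """
    flagged = {}
    for user, counts in history.items():
        if len(counts) < 2:
            continue  # not enough data to form a baseline
        baseline = mean(counts)
        spread = stdev(counts)
        if spread == 0:
            continue  # perfectly flat baseline: z-score is undefined, skip here
        z = (today.get(user, 0) - baseline) / spread
        if abs(z) >= threshold:
            flagged[user] = round(z, 2)
    return flagged


# Illustrative data only: daily login counts for two made-up users.
history = {
    "alice": [4, 5, 6, 5, 4, 6, 5],
    "bob": [20, 22, 19, 21, 20, 23, 21],
}
today = {"alice": 40, "bob": 22}

flagged = flag_deviations(history, today)
print(flagged)
```

The tool can tell you that "alice" looks unusual today. It cannot tell you whether she has just changed roles, is running an approved migration, or has had her account taken over. That interpretation is the human part of the table.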

Entry‑level cybersecurity work will change, not vanish

Where AI is likely to have the most visible impact is at the junior end of the market. Repetitive, manual security tasks (trawling through low‑value alerts, compiling simple reports, running routine scans) are exactly the kind of work that AI and automation will streamline or absorb.

That does not mean there will be “no jobs for graduates” in cybersecurity. It does mean that entry‑level practitioners will be expected to operate at a higher level, sooner. Early‑career analysts will need to:

- Use AI‑enabled tools confidently, rather than working around them

- Interpret results instead of simply collecting them

- Communicate clearly with non‑technical stakeholders about what an alert or risk actually means

- Pursue formal training and certification to accelerate that leap

On that last point, the good news is that structured programmes – like Firebrand’s accelerated pathways into security operations, governance and cloud security – help early‑career professionals move beyond basic, automatable tasks into roles where they are actively interpreting AI outputs, making decisions and designing controls.

Cross‑functional skill sets will be in high demand

One of the strongest trends emerging from the AI shift is the rise of hybrid roles: cybersecurity professionals who combine technical foundations with deep domain knowledge in another discipline.

Firebrand’s own guidance for professionals moving from finance into cybersecurity highlights how skills in risk, controls, regulation and data management map naturally onto cyber roles in governance, compliance, fraud prevention and security operations. The same logic applies to people coming from law, audit, engineering, healthcare or operations: the more you understand the underlying business, the more valuable your security expertise becomes when AI is involved.

As AI takes over some of the low‑level pattern recognition, employers are increasingly seeking people who can bridge technical findings and commercial impact. They also look for people who can navigate regulatory expectations around AI, privacy and data protection.

This is good news if you already have a career behind you and are considering a pivot into cybersecurity. You are not starting from nothing. You are re‑packaging experience that AI cannot replicate and then adding targeted cyber skills and certifications on top.

New AI‑era cybersecurity roles are emerging

The narrative that “AI will kill cyber jobs” also ignores the roles that AI itself is creating.

As organisations deploy AI across their operations, they need specialists who can secure models, data pipelines and AI‑driven services. Emerging and fast‑growing roles include:

- AI security specialists: Focusing on model integrity, prompt injection, data poisoning, and securing AI‑enabled applications

- Cybersecurity data scientists: Building and tuning detection models, working with large data sets, and collaborating closely with SOC and threat intelligence teams

- Data security and governance leads: Responsible for how sensitive data is collected, labelled, stored and used in AI systems, and for aligning that practice with regulations such as GDPR

- Cyber risk, strategy and communication roles: Translating AI‑related cyber risk into board‑level decisions, investment cases and organisational change

As Firebrand’s analysis of the UK skills gap shows, the expanding attack surface, rising breach costs and increasing use of AI by criminals are driving demand for more, not fewer, people in security.

The mix of roles is shifting towards architecture, governance, data protection and strategic oversight. These are areas in which human judgement is central.

Why AI literacy is now a core cybersecurity skill

If AI is not your replacement, what is it? Increasingly, it is part of your toolkit.

For individual practitioners, this means:

- Learning how AI‑driven security products actually work. Understanding their assumptions, limitations, and typical failure modes is crucial for avoiding blind spots.

- Building a healthy scepticism about AI outputs. Treat AI‑generated findings as inputs to human analysis, not ground truth. False positives, model drift and biased training data all need to be actively managed.

- Using AI to amplify, not replace, your expertise. That might mean drafting initial playbooks or reports with a large language model and then refining them, using AI to triage alerts, or generating “what if” scenarios to support tabletop exercises.

Just as spreadsheets did not eliminate accountants, AI will not eliminate cybersecurity professionals. It will, however, make a clear distinction between those who can orchestrate and oversee AI‑enabled defences and those who cling to purely manual ways of working.
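The idea of treating AI findings as inputs to human analysis, rather than ground truth, can be sketched as a simple routing rule for alert triage. Everything here is hypothetical: the `Alert` fields, the `route` function, the queue names and the score thresholds are invented for illustration and do not reflect any particular SIEM or SOAR product.

```python
from dataclasses import dataclass


@dataclass
class Alert:
    alert_id: str
    model_score: float    # 0.0 to 1.0, from a hypothetical detection model
    asset_critical: bool  # whether the affected asset is business-critical


def route(alert, auto_close_below=0.2, escalate_at=0.8):
    """Decide who looks at an alert; the model score informs, it does not decide alone."""
    if alert.model_score >= escalate_at:
        return "priority_review"      # high score: an analyst sees it first
    if alert.asset_critical or alert.model_score >= auto_close_below:
        return "analyst_review"       # a human checks anything uncertain or critical
    return "auto_close_sampled"       # low risk: closed, but sampled for audit


# Illustrative alerts only.
alerts = [
    Alert("A-1", 0.95, False),
    Alert("A-2", 0.05, True),
    Alert("A-3", 0.05, False),
    Alert("A-4", 0.50, False),
]
decisions = {a.alert_id: route(a) for a in alerts}
print(decisions)
```

Two design choices carry the point. A low model score never overrides the criticality of the asset, and even auto-closed alerts are sampled so that analysts can catch false negatives and model drift over time.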

How to future‑proof your cybersecurity career in the age of AI

Putting this all together, the question for security professionals is not “Will AI replace me?” but “How do I stay ahead of the curve?” A practical roadmap looks something like this:

Double down on fundamentals.

Make sure your grasp of networks, operating systems, identity, encryption and common attack techniques is rock solid. AI tools are built on top of these basics; they do not replace them.

Get hands‑on with AI‑enabled security tools.

Explore the AI features in your SIEM, EDR, cloud security and email security platforms. Experiment in safe environments so you understand what the tools can and cannot do.

Strengthen your “bridge” skills.

Invest in communication, stakeholder management and the ability to frame security decisions in business language. These are precisely the skills that become more valuable as automation spreads.

Pursue certifications that signal you can operate at this new level.

Industry‑recognised certifications – in security operations, cloud security, governance or AI‑related disciplines – remain one of the clearest signals to employers that you have both the knowledge and the discipline to thrive in demanding environments.

Providers like Firebrand specialise in accelerated, immersive training that can help you close specific gaps quickly and credibly.

Consider a hybrid profile.

If you have experience in finance, law, compliance, operations or another business function, lean into that. Combining domain expertise with cybersecurity and AI literacy is likely to be one of the most resilient career bets of the next decade.

Get certified to get ahead.

If you want to stay employable in this new landscape – or break into it – you need credentials that prove you can combine solid technical fundamentals with the ability to harness AI safely.

Explore Firebrand’s accelerated cybersecurity certifications and AI‑ready training pathways to build the skills employers are crying out for and turn this wave of change into an opportunity, not a risk.
